Latest update of this article: early 2022 (source code update)

D3wasm is an experiment to port the id Tech 4 engine (a.k.a. the “Doom 3 Engine”) to Emscripten / WebAssembly and WebGL, making it possible to run games such as Doom 3 inside modern Web Browsers.

For people eager to see the results, have a look at the Online Demonstration right now. Otherwise, you can proceed to the Contents of this article. In all cases, be sure to read the Port Status to know what to expect!

Contents

- Port Status

- Online Demonstration

- Legal

- History and Future Directions

- Source Code - (Latest Updates)

- Technical Details

- Closing Thoughts

- Author and Contact

- Disclaimer

Port Status

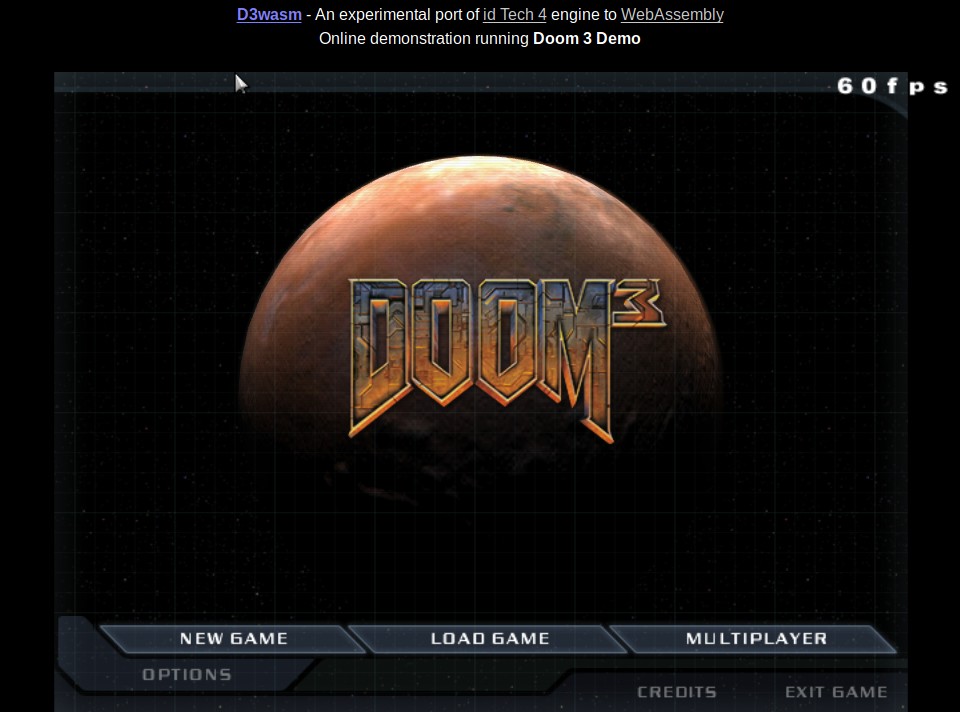

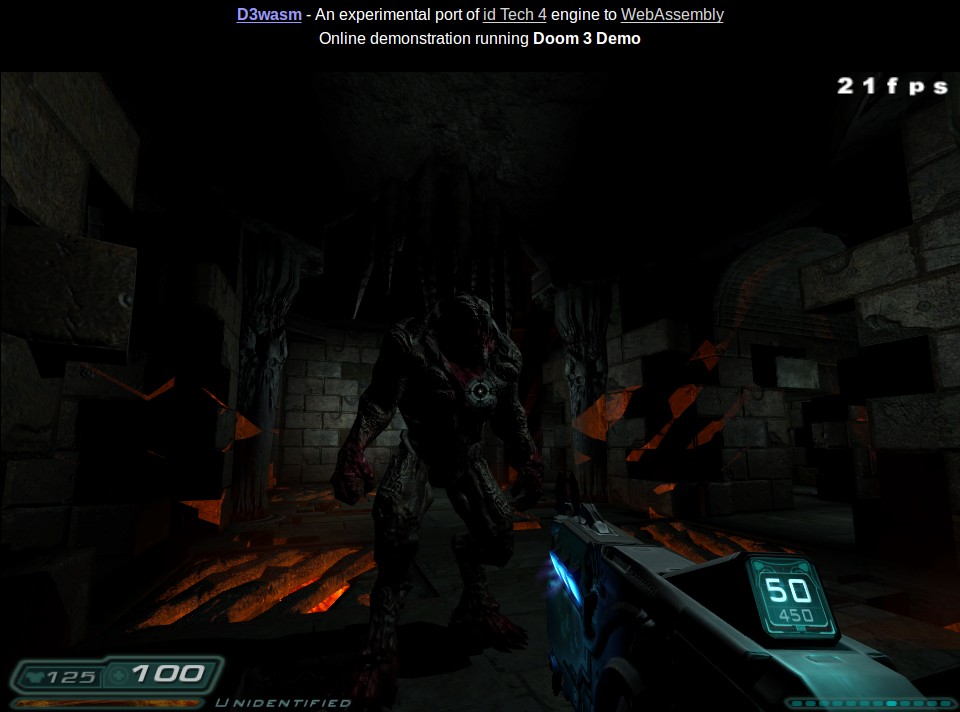

The port is functional, with a reworked backend renderer using the WebGL subset of OpenGL ES 2.0 and GLSL shaders, greatly improved performance compared to the initial version released earlier this year, better game data loading/caching, stability fixes, and local savegames support.

It works on all major Desktop Browsers: Firefox, Chrome, Safari, Edge and Opera. So it really is possible to play the game and enjoy the nice graphics.

NB: Screenshots were taken on the latest Firefox / Ubuntu 18.04 / nVidia binary drivers, with a slight gamma adjustment because the game is actually very dark!

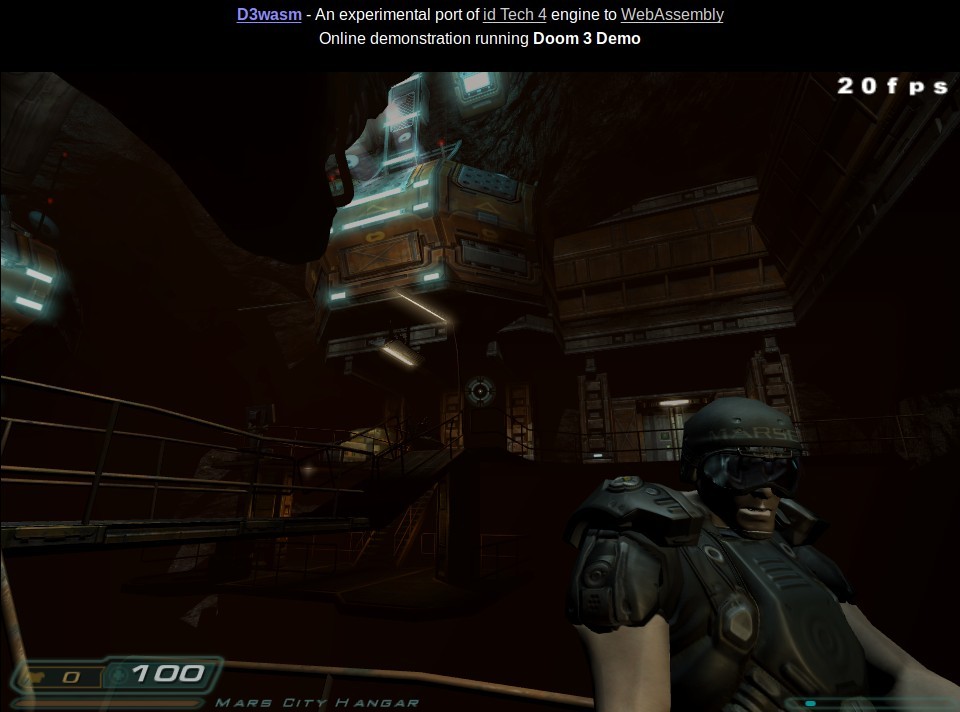

Performance is decent, at around 30-40 FPS on a modern desktop system (ranging from 20 FPS in Edge and 40 FPS in Firefox to 50 FPS in Chrome). The visuals match those of the original game almost exactly.

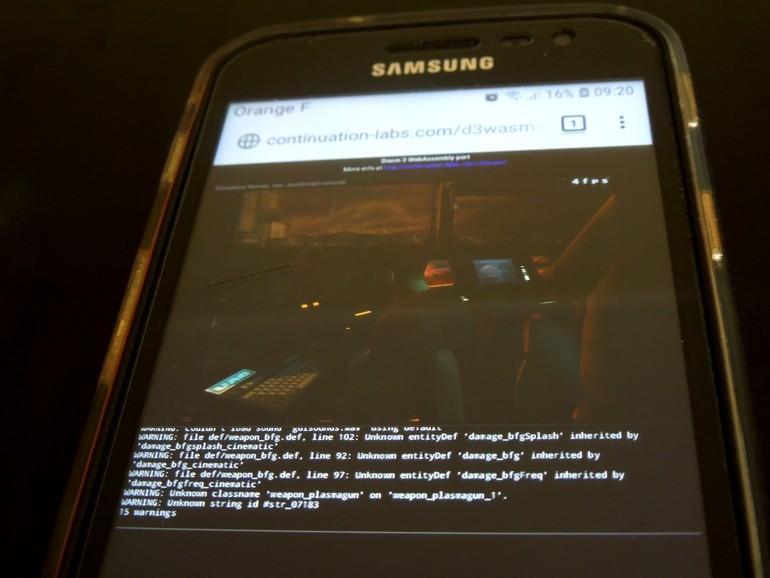

As for Mobile Browsers: your phone will literally burn in Hell ;) More seriously, while the port works on mobile phones too (at least, it has been tested on a mid-range Android smartphone with Firefox), it is not playable due to the lack of touch-based controls and, to be honest, relatively poor performance (though some users reported to me that they were able to get 30 FPS on their smartphones too).

Online demonstration

It’s right here, marine:

http://wasm.continuation-labs.com/d3demo/

It is using the Doom 3 Demo.

Prerequisites

Any modern browser, on any platform, with support for: WebAssembly, WebGL, and IndexedDB.

A good CPU, and 850MB RAM.

400MB of game data will be downloaded, and cached to the IndexedDB browser storage (this is really a one-time download requirement).

I made a couple of changes to the id Tech 4 engine in order to be able to start the game before all the data has been loaded. So, along with the initial 5MB executable download, there is a first 15MB download that fetches only what is necessary to load the game engine and enter the main menu. Then, the remaining ~380MB are fetched asynchronously.

In the meantime, you can for example tweak your control settings in the main menu (“WASD” configuration by default). If you start a new game before the remaining data has been fully downloaded, the program will simply show a loading screen and wait for the download to complete.

Tidbits

Be sure to have a look at the “Help” button on the HTML page, explaining a few things about key bindings and video resolutions.

The “Esc” key so commonly used in old PC games has been replaced by the “Home” key, as the web is a very different world. This key is used to go to the main game menu, or to skip cinematics.

The “Tilde” or “Backquote” key, used to open the Doom 3 console, might not work. As a replacement you can use the “Insert” key.

There is a new console variable “r_usePhong” (enabled by default). It lets you switch between Phong shading and the traditional Gouraud shading (as in the vanilla game). You can change it in the Doom 3 console. There is not much difference, both in terms of visual quality and performance, but I do find the Phong style to be a little bit better.

When using Phong shading, you can tweak the specular exponent with the “r_specularExponent” console variable to change the shininess of things. For some fun, try setting the exponent to “0” and discover the Toonish Doom style, which reminds me of the Borderlands video game :)
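To see why an exponent of 0 flattens the highlights, here is a minimal sketch of the classic Phong specular term. It assumes the port's shader follows the textbook formula, which the article does not confirm; the function name `PhongSpecular` and its parameters are hypothetical and only illustrate the math.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Classic Phong specular term: max(R.V, 0) raised to the specular exponent.
// rDotV is the cosine of the angle between the reflected light direction
// and the view direction. The exponent plays the role that
// "r_specularExponent" is ASSUMED to play in the port's shader.
double PhongSpecular(double rDotV, double exponent) {
    assert(exponent >= 0.0);
    const double clamped = std::max(rDotV, 0.0);
    return std::pow(clamped, exponent);
}
```

With an exponent of 0 the term evaluates to 1 whatever the angle, so the highlight no longer falls off across the surface. That is one plausible explanation for the flat, cartoon-like look, under the assumption stated above.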

Note that you can save your game. Savegames will be stored locally in an IndexedDB store (as well as configuration files by the way), and can be reused at a later time.

Common issues

IndexedDB storage will not work if you are in « Private Browsing ». You may disable private browsing safely, as I do not track anything from you.

The game is actually very dark. And for some reason, adjusting the brightness in-game does not work for now. In the meantime, if necessary, you may adjust your screen contrast/brightness at the system level, or on the screen directly.

On the other hand, on Chrome under Linux, there might be a very unusual gamma/color profile that makes « darkness » too bright and not good looking. To solve that issue if it happens, you have to explicitly force a color profile. Look in chrome://flags/ for the « Force Color Profile » option, and choose « sRGB ».

Legal

The port itself is based on the GPL source code release of Doom 3, and the online demonstration redistributes the game data coming from the Doom 3 Demo (D3Demo.exe), freely available for download on the Web (Fileplanet). This demo has a specific license.

So, to do my best to comply with legal requirements:

- The source code of the port is available to the public, as required by the GPL. See Source Code.

The license of the demo is located here: License.txt, as required by the license itself.

According to section 3. Permitted Distribution and Copying of the license document, redistribution is authorized provided it is not for commercial purposes (which is the case here, being an experimental project), and the license file is made available to end users.

I nevertheless contacted Bethesda/Zenimax (owners of id Software now) to ask for a more explicit authorization. However, I never got any reply from them, even after a few contact attempts. Either they simply don’t care (this is a 15-year-old video game, and this is only the Demo of the game), or they prefer not to answer for unknown reasons.

At least, I tried to be fair and to get in touch with them. Maybe this will boost their Doom 3 sales a bit, who knows? I’d be curious.

Anyway, if you enjoyed the Demo, you can find the full commercial version of the game on various distribution platforms.

History and Future Directions

This project is the latest step in a series of game porting experiments to WebAssembly, with the goal of demonstrating that, as of 2019, it is possible to run very large and demanding C++ programs inside Web Browsers.

By the end of 2018, after having successfully ported the Arx Fatalis video game to WebAssembly (see the Arxwasm project), I had the idea to go to the next level and port something that would be really impressive. Doom 3 immediately came to my mind. At the time the game was released (2004), it was really bleeding-edge technology in terms of game engine and graphics, and could bring a lot of Desktop systems to their knees. Even by today’s standards, the visuals are still good looking. Additionally, the game code being open source and known to be of good quality (NB: I can confirm this), it was definitely a good candidate.

After a quick look on the Web I realized nobody had ever had this crazy idea. And honestly, I could understand why: I myself thought it would not be possible.

So I decided to do it, because I wanted to know, and I enjoy doing impossible things.

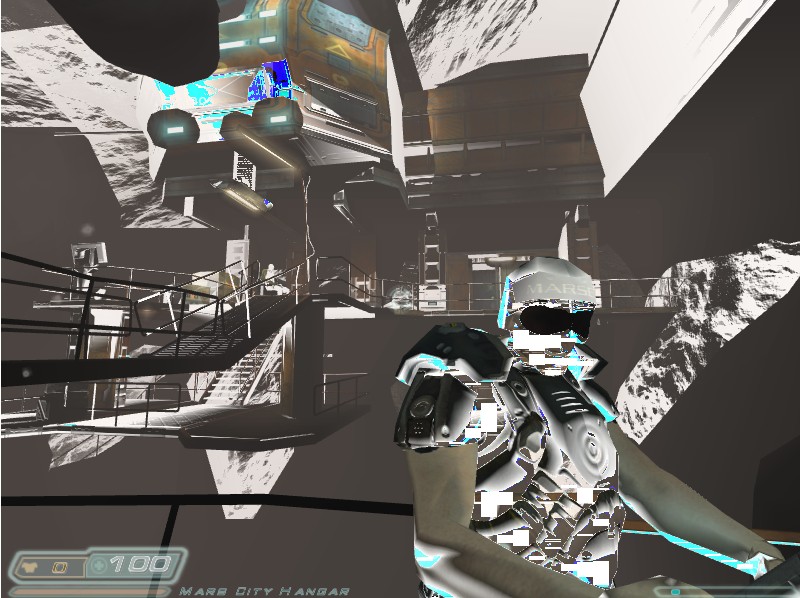

This turned out to be an incredibly difficult but rewarding task, and by early January 2019 I finally got a first version working. That version was far from optimal: poor performance and weird-looking visuals (using a legacy rendering path without shader support). But as I was very happy to have succeeded, I nevertheless decided to write a post on the Emscripten forums about it.

NB: compare with the screenshot of the same scene in the Port Status section. You’ll notice a big difference in visual quality and FPS.

The reaction to this post was unexpected: the news immediately spread to Twitter thanks to a well-known WebAssembly Twitter account, and then ended up on top of Hacker News. My cheap hosting server did not survive the Slashdot effect that came afterward…

With so much interest, I decided to go even further in the porting process, and to fully rework the renderer backend for WebGL (to have the nice bumpy graphics and good performance), to enhance the way data files were fetched (people complained a lot about the long loading times), and a lot of other things.

The result of this effort is the D3wasm project.

There are obviously many things left to do. For now, my plans are to use the project as a sandbox to continue further experiments with WebAssembly: investigate Offscreen rendering, multi-threading with Web Workers, find smart ways to handle data loading/streaming, and reuse the engine for non-gaming purposes (architecture, CAD, virtual tourism, etc.).

Source Code

The source code is available on Github:

https://github.com/gabrielcuvillier/d3wasm

It is a fork of the dhewm 3 source port, maintained by Daniel Gibson (Twitter). This fork is itself a fork of the Doom 3 GPL source code, released by id Software a few years ago.

The project uses Emscripten, led by Alon Zakai (Twitter). It compiles LLVM bitcode to WebAssembly, and provides everything needed to have a C/C++ runtime environment on the Web. A lot of kudos go to the people involved in that project, their work is impressive!

Initially the port was based on the Regal GL library, developed by Cass Everitt / nVidia and then later by Google. This library is a GL 1.x emulation layer on top of OpenGL ES 2.0. However, this project has not been active for a couple of years, and required tweaking for the game to run (NB: a specific fork has been created for that purpose, and released as an Emscripten port). I finally decided to remove that dependency by migrating the D3 backend renderer to WebGL.

Some parts of the project were initially based on other Doom 3 forks, such as Dante (mostly for the main light interaction GLSL shader), with a bit of inspiration from fhDoom (the fog and blend light shaders). As the former was in an unfinished state (lots of missing shaders, and it did not compile), and the latter applies to the BFG edition of the game only (which is very different, believe me), I eventually did a clean-room rewrite of the renderer backend for WebGL.

The code is not cleaned up yet, and some parts are really not good looking. That’s why there is no additional info on the internals for now. There are build instructions though, for those who dare to test.

Please note that many things have been removed from the original D3 code base, and in particular, I removed most of the embedded in-game D3 tools and compilers, renderer debug tools (“r_show*” console variables), MacOSX and Win32 specific code, legacy ARB2 renderer path, and asynchronous network server code (while still keeping the network client code). It is really a WebAssembly specific game runtime now.

I nevertheless kept the ability to compile/run it on Linux for the purpose of doing fair comparisons between Wasm and Native builds (more info on this in the Technical Details section).

Latest Updates

February 2022

- Minor updates to enable compilation with latest versions of Emscripten (v3+):

- 35% smaller binary size (with -Oz optimization flag), 50% better performance. Nice!

August 2019

- Updated online demonstration to v0.4:

- Version compiled using Upstream LLVM backend + new ASYNCIFY/ASYNCIFY_WHITELIST option: 10% smaller binary size, +5-10% better performance.

July 2019

- Migrated to Emscripten Upstream LLVM backend. The online demo is not yet updated, as there is still an issue regarding binary size, which is quite a bit bigger than with the Emscripten Fastcomp backend.

- Fixed a couple of minor internal bugs/issues

- Added scripts to package the game data, and Build instructions

- Updated the performance numbers in the article (it works better with latest browsers)

Feb 2019:

- Updated online demonstration to V0.3:

- GLSL renderer optimization / performance improvements, and fixed many stability issues

- Implemented the missing Blend Light shader

- Support for “TimeDemo” command, to do some benchmarks (full version of the game only though)

- Better handling of the “Esc” key issues (replaced by “Home”)

- Updates to better support changing video resolution

- Re-allowed Fullscreen mode (partial: this is just canvas rescaling)

- Enhanced HTML shell, including an additional help section

Jan 2019

- V0.2 release, with full WebGL render backend and GLSL shaders

- Initial version of this page

Dec 2018

- V0.1 release (legacy GL 1.x renderer path)

RAM usage is so big because all the data files need to be stored in memory. This is due to the filesystem capabilities that browsers expose, which are next to nothing.

As a result, the 850MB memory requirement is split as follows: 384MB allocated for the program itself (yes, it’s big, but we are running at Ultra quality settings) + 400MB needed for the data files + 64MB to store additional files (configs, savegames, screenshots, etc.).

Yes… all in the RAM.

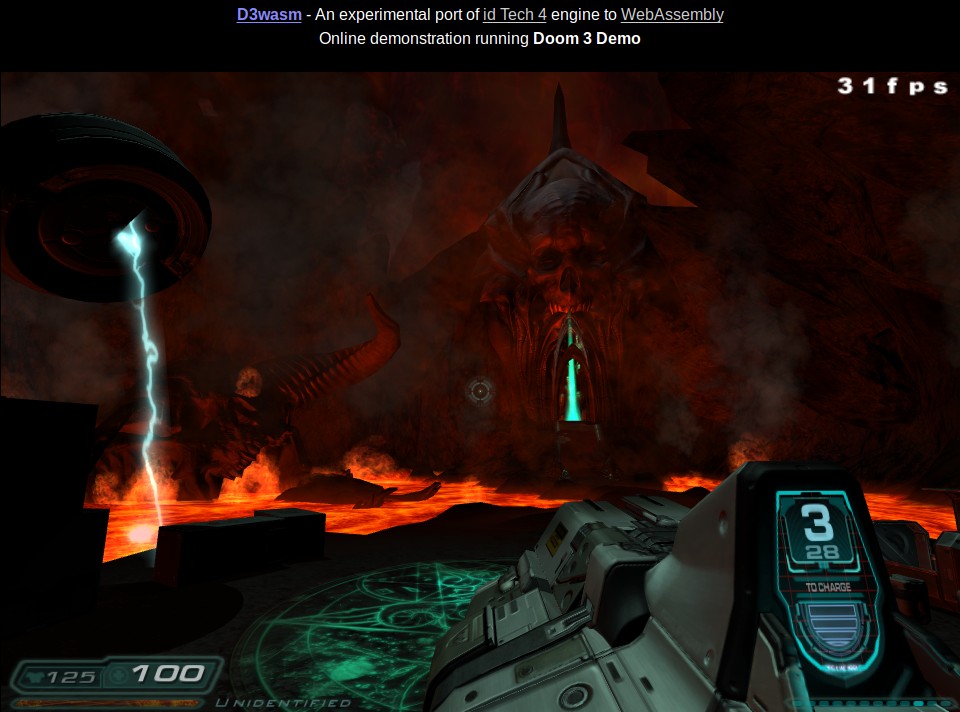

It is possible to run the full version of the game with the port. This is working fine, and I played a couple of levels like this, such as the famous “Hell” level, which has all the best visual effects of the game.

The only requirement is to be psychologically OK with a web page crunching 2GB of RAM… with 1.7 GB just for the game data “stored-in-RAM-as-filesystem”. You also need the full game, which is not provided with the online demonstration for obvious reasons.
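The memory figures quoted above can be checked with a few lines of arithmetic. This is only a sketch: the constant and function names are hypothetical, and reusing the same 384MB program heap and 64MB of extra files for the full game is an assumption, not something the article states.

```cpp
#include <cassert>

// All sizes in megabytes, taken from the figures quoted in the article.
constexpr int kProgramHeapMB = 384;  // program memory at Ultra quality
constexpr int kExtraFilesMB = 64;    // configs, savegames, screenshots

// Total RAM needed when dataMB of game data is kept in memory as a
// filesystem (hypothetical helper, for illustration only).
constexpr int TotalMemoryMB(int dataMB) {
    return kProgramHeapMB + dataMB + kExtraFilesMB;
}

// Demo data set: 384 + 400 + 64 = 848MB, i.e. the ~850MB quoted.
static_assert(TotalMemoryMB(400) == 848, "demo memory budget");
```

Under the same assumption, `TotalMemoryMB(1700)` for the 1.7 GB of full-game data gives 2148MB, in line with the "2GB" order of magnitude mentioned for the full game.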

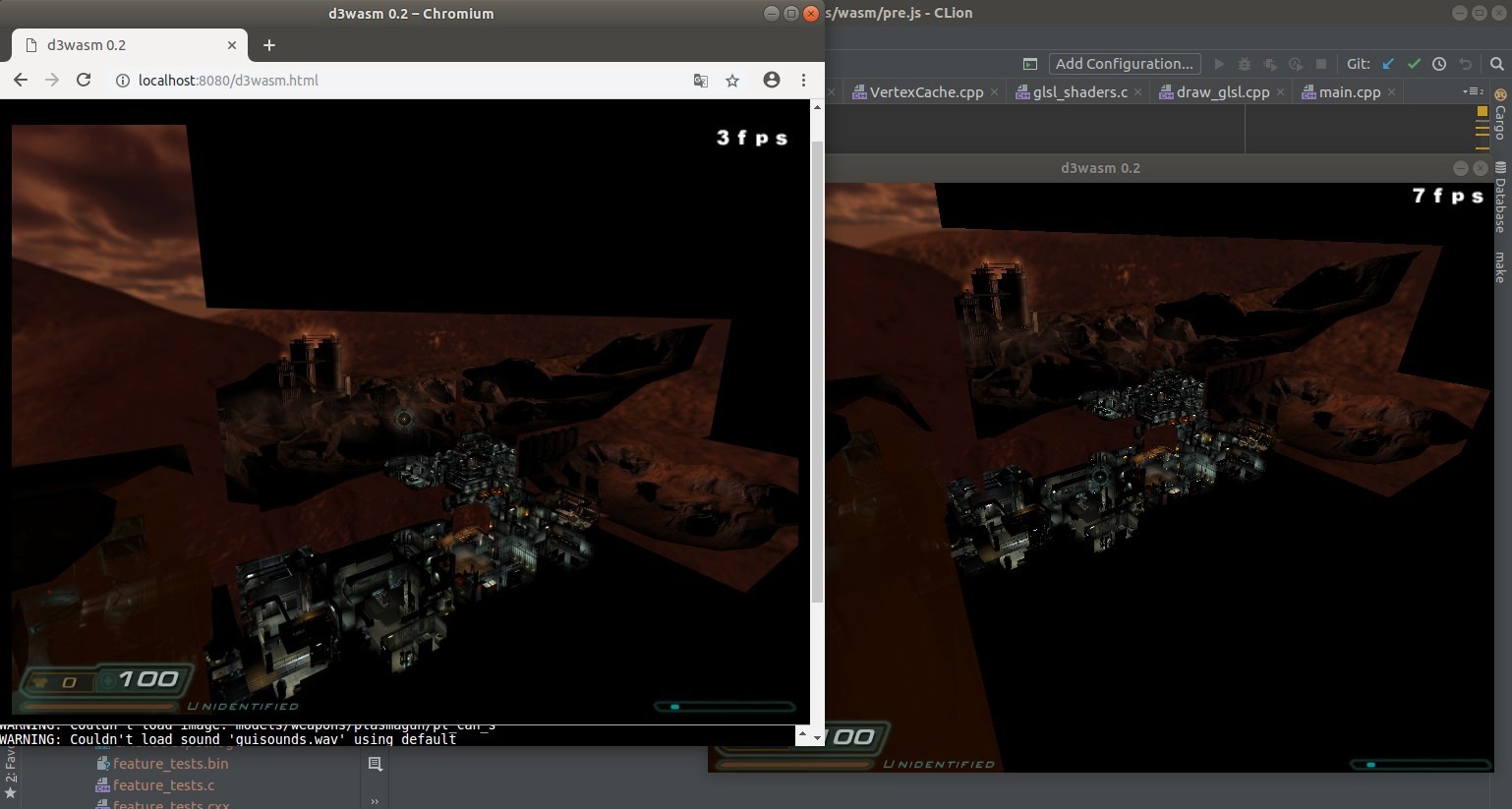

- 7 FPS on Native to 3 FPS on Wasm using Chrome (and 2 FPS using Firefox).

- 30 FPS on Native, and 15 FPS on Chromium (and 10 FPS on Firefox)

- General light interaction (diffuse/specular/bump/light projection)

- Ambient surfaces (standard light-emissive surfaces, skybox/wobblesky cubemapping, reflection mapping)

- Stencil Shadow volumes

- Fog and Blend Lights effects (mostly used in Hell level)

- Z-buffer filling, with or without clip planes

- Bumpmapped reflection mapping (replaced by classical reflection mapping in the port). Very minor visual difference.

- Heathaze effects. Kind of “heat” effect. I did not figure out what the original ARB assembly shaders are trying to do.

- A couple of effects actually not used in the original game: mostly “Glasswarp”, and “XRay” effects.

First, I started to fix the existing port of the Regal GL emulation library to Emscripten / WebAssembly (which had an unfinished status), and re-integrated the original fixed function pipeline renderer path of the game (that had been removed from the dhewm port).

This is what can be seen in the earlier version of the port demo that has been released. Doing it that way allowed me to first “validate” that the game could run on the web without having to rewrite the backend first. This was done at the cost of ugly graphics and poor performance… but in the end, I was able to get Doom 3 running on the Web.

The fixes I made to the Regal GL emulation library eventually became an Emscripten port, accepted by the Emscripten team. The changes are too low-level to explain in detail here, but they mostly involved debugging the shader generation code, which was somewhat broken due to bit-rot.

Note that the Emscripten GL 1.x emulation library could not be used in this project because D3 uses some advanced functionality of GL 1.x, such as Texture Combiners (GL_COMBINE_ARB). However, this emulation library works well for most of the GL code I have used so far.

Then, I started to integrate GLSL shaders as replacements for the fixed-function rendering passes (light interaction, then shadows, then ambient surfaces, etc.). So for some time, there were two GL drivers cohabiting in the same code: the legacy renderer path with the Regal library (using ES 2.0 / GLSL behind the scenes), and the new ES 2.0 / GLSL renderer path. On the code side, it was a total mess, but that worked.

Finally, once all rendering passes were converted to GLSL, I replaced all the remaining GL fixed function pipeline code with OpenGL ES 2.0 code. At that time, I was able to finally drop Regal and had an OpenGL ES 2.0 backend.

- So, as a last step after having removed Regal GL Library, I reworked the VBO code of idTech 4 so that it would work with WebGL directly (ie. rework of the “VertexCache” class), and in turn, I could remove the ES2.0 emulation layer.

Here is how it looked the first time I got something on the screen:

It took me a full day to find out that it was because of that “premultipliedAlpha” option of WebGL context, enabled for some reason… Instead, I was looking for some bug deeply in the code of Regal GL library. Aw…

Last but not least, to talk again about the game, did you know that almost 2% of the game time is spent in idPluecker::operator[]?

Like me, you would feel less dumb by not reading the Wikipedia article on the Pluecker Coordinates and Pluecker Embedding.

Excerpt:

“In mathematics, the Plücker embedding is a method of realizing the Grassmannian Gr_k(V) of all k-dimensional subspaces of an n-dimensional vector space V as a subvariety of a projective space. More precisely, the Plücker map embeds Gr_k(V) algebraically into the projective space of the kth exterior power of that vector space, P(Λ^k V). The image is the intersection of a number of quadrics defined by the Plücker relations.”

WOW, is it me or ?

- On the WebAssembly side:

- On the Gaming/Apps side:

- On the Legal and Business side

Technical Details

There are a lot of things to say. Here are a few in random order:

RAM usage

Benchmark between WebAssembly and Native builds

It is possible to compile the port to a Native Linux build, using almost exactly the same code base (= no multi-threading, GLES/WebGL usage, etc.). This allows a “fair” comparison between the WebAssembly version and a natively compiled version. So here is the point:

On average the WebAssembly/WebGL version of the engine is between 40% and 60% slower than the Native/GLES compiled version.

The following screenshot shows a side-by-side comparison of the same “stress-scene” (noclip mode, showing the whole map) under Wasm (on Chromium) and Native builds, using the same code base.

With the same scene, we can disable most of the backend renderer interacting with GL (“r_skipRender 1” and “r_skipGuiShaders 1” in the D3 console), so that only the front-end renderer is running. This front end is CPU only, and does portal visibility / lighting computations amongst other things. The results are (no screenshots, as the render is black of course!):

So when not GPU bound, it’s about 50% slower. I assume Firefox just lacks some optimizations to match Chrome performance.

While it might sound like a big performance loss, I personally find this to be exceptionally good given the fact that we are running in a sandboxed VM, with probably some browser runtime overhead (GC, the DOM, etc.), Javascript functions and various Polyfills always lurking around to bite you silently, and a technology that is not yet 100% mature.

This is in all cases way better than plain Javascript, for which synthetic benchmarks generally show on average a 6x performance difference compared to native C++, with huge variability in the results: from 4x in the best case to 10x in the worst case (see the Computer Language Benchmarks Game). Basically, by using WebAssembly/C++ instead of Javascript, we could in theory move from a 6x performance loss to only a 2x one compared to a native C++ program.

To put it in other words, while WebAssembly/C++ is 2x slower than Native/C++, it is nevertheless 3x faster than pure Javascript. So the point is: there is in theory a 3x performance boost waiting for us ahead on the Web. But that’s the theory.
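The ratios used in this section boil down to two small formulas, sketched here. The helper names are hypothetical, and the inputs are the rough figures quoted in the article, not new measurements.

```cpp
#include <cassert>

// Percentage by which measuredFps is slower than baselineFps.
double SlowdownPercent(double baselineFps, double measuredFps) {
    assert(baselineFps > 0.0);
    return (baselineFps - measuredFps) / baselineFps * 100.0;
}

// How many times faster A is than B, when both are expressed as
// "N times slower than native".
double RelativeSpeedup(double slowdownOfB, double slowdownOfA) {
    assert(slowdownOfA > 0.0);
    return slowdownOfB / slowdownOfA;
}
```

For example, `SlowdownPercent(30.0, 15.0)` gives 50, matching the 30 FPS native versus 15 FPS Chromium figures, and `RelativeSpeedup(6.0, 2.0)` gives 3, which is the theoretical 3x gain of WebAssembly/C++ over Javascript.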

Mobile performance issue

The mobile performance is really disappointing though (3-5 FPS on mobile vs 30-50 FPS on desktop).

NB: sorry for the low-quality shot. The point is the 4 FPS on the top right.

It means there is almost a 10x performance loss between my modern laptop system and my modern smartphone. But I think it is not because of WebAssembly, but simply because the CPU/GPU chipsets of smartphones are much slower than we would want them to be (and than ARM chipset vendors’ marketing would want them to be). This explains why the mobile Web is so sluggish.

For fun, “let’s compare apples and oranges using empirical benchmark results”: if there is a 10x performance loss between desktops and smartphones, and a 6x loss between Web apps and Native apps, we are close to a 60x performance hit between a native C++ application and a Web App running in a mobile browser.

Now, THAT’S a real gap, isn’t it ? ;)

More seriously, if we put this fun and not very accurate comparison into perspective (read: situation is probably even worse…): WebAssembly could really give a serious performance boost on mobile web.

So, here is a Pro-Tip for your next Startup: design a hardware WebAssembly CPU (WPU?) to boost things close to native speed, and sell this to ARM or Intel. Maybe this is the next big thing.

EDIT Feb. 2019: it turns out some people already started to work on this: see the wasm-metal project. People are crazy, and that’s a good thing for innovation.

SIMD code

Based on profiling, almost 25% of the game time is spent in low-level Vector/Maths operations. These functions have various SIMD backends (for SSE/SSE2/SSE3/etc.), but none of these backends are supported by WebAssembly. As a result, the generic C++ backend code is used.

Multithreading

Multithreading has been completely removed from the engine, because it is not yet well supported by browsers.

In early 2018, following the “Spectre” and “Meltdown” security issues, major browser vendors decided to disable the “SharedArrayBuffer” feature in their engines as a temporary security measure against these potential attack vectors. Sadly, these shared array buffers are needed for mutex/lock-based multi-threading in WebAssembly.

This will eventually change with time: Chrome started to re-enable things lately, and it is possible to enable them again on Firefox by tweaking flags. But I don’t like the idea of a browser-flags-based Web, and security is something we can’t decide to trade for the sake of performance or ease of development.

“De-multithreading” a multithreaded app is somewhat tricky, but I eventually succeeded. It consists of manually executing the thread-specific code from the main loop. This has to be done in a smart way for things to work and stay in sync.

This does have an impact on the game though, but not on the performance side as you would guess. The game has a single-threaded renderer, and uses threads mostly to accurately update a timer at 60 Hz (which the various game subsystems use on a regular basis), to handle Audio asynchronously, and to keep things from locking other unrelated things too much.

And here is the pain point: the issue is more about things starting to “hang” the game a little bit. For example, if you run a long command in the D3 console, the game will now hang until the command completes, or if the FPS goes too low, audio will start to jitter/suffer because rendering and audio mixing are both done in the main thread.
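The idea of running former thread bodies from the main loop can be sketched as follows. This is not the port's actual code: `FixedRateTask` and `RunFrame` are hypothetical names, and the catch-up accumulator is just one common way to keep a fixed-rate timer (such as the 60 Hz one) ticking accurately when frames are slow.

```cpp
#include <cassert>
#include <functional>
#include <utility>
#include <vector>

// A piece of work that used to live in its own thread and is now called
// from the main loop at a fixed rate (hypothetical helper).
class FixedRateTask {
public:
    FixedRateTask(double hz, std::function<void()> tick)
        : periodMs_(1000.0 / hz), tick_(std::move(tick)) {
        assert(hz > 0.0);
    }

    // Called once per frame with the elapsed time. Runs as many ticks as
    // needed to catch up, so a slow frame does not lose timer ticks.
    void Update(double elapsedMs) {
        accumulatorMs_ += elapsedMs;
        while (accumulatorMs_ >= periodMs_) {
            accumulatorMs_ -= periodMs_;
            tick_();
        }
    }

private:
    double periodMs_;
    double accumulatorMs_ = 0.0;
    std::function<void()> tick_;
};

// One iteration of the single-threaded main loop: the former thread
// bodies run first, then the frame itself is rendered.
inline void RunFrame(std::vector<FixedRateTask>& tasks, double elapsedMs,
                     const std::function<void()>& renderFrame) {
    for (FixedRateTask& task : tasks) task.Update(elapsedMs);
    renderFrame();
}
```

Because the tasks and the renderer share one thread, a long frame still delays every task until the next `RunFrame` call, which is the kind of "hang" and audio jitter described above.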

Renderer backend features

The OpenGL backend renderer has been rewritten/adapted for the WebGL subset of OpenGL ES 2.0 and GLSL. The visuals almost match those of the vanilla game.

The following GLSL shaders have been implemented:

On the screenshot: the Blend Light effect, which is a kind of local foggy light. Such Evil.

The following shaders are NOT implemented:

Also, I had to disable compressed textures. I was having too much trouble loading the precompressed DDS files into WebGL. There is room for improvement here, as I am sure compressed textures would improve performance on the mobile side.

There is a glitch with some post-processing effects, such as the “Berserk” mode (“give berserk” on D3 console). I still need to figure out what’s happening.

Finally, the engine is now fully based on streaming geometry to Vertex Buffer Objects (VBOs) with no client-side memory rendering, as required by WebGL.

In the code, the shader implementations are in the “neo/renderer/glsl” folder. Each fragment and vertex program is a separate C++ file that embeds GLSL code using the C++11 raw string literal feature. While it might look weird at first glance, in practice this is a very handy way of embedding custom text data in a C++ application.

Most of the backend renderer is in the “draw_gles2.cpp” file, along with important changes in “VertexCache.cpp”, which has been updated to match the VBO-only policy of WebGL.
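As an illustration of the raw string literal technique, here is a minimal sketch. The shader body is a toy example and not one of the port's shaders; the names `kExampleFragmentShader`, `u_diffuse` and `v_texCoord` are hypothetical.

```cpp
#include <cassert>
#include <string>

// A fragment shader embedded in C++ with a C++11 raw string literal.
// No escaping or per-line quoting is needed, so the GLSL stays readable.
// Toy shader for illustration only, NOT taken from the D3wasm sources.
const char* const kExampleFragmentShader = R"GLSL(
precision mediump float;
uniform sampler2D u_diffuse;   // hypothetical uniform name
varying vec2 v_texCoord;       // hypothetical varying name

void main() {
    gl_FragColor = texture2D(u_diffuse, v_texCoord);
}
)GLSL";

// Returns the source as a std::string, e.g. to hand over to the code
// that compiles shaders.
inline std::string ExampleFragmentShaderSource() {
    return kExampleFragmentShader;
}
```

The custom `GLSL` delimiter is optional, but it makes the block easy to spot and avoids any clash with a `)"` sequence that might appear inside the shader text.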

Renderer backend rewrite history

The rationale behind the rewrite of the renderer backend is that the initial D3 backend used either the GL fixed function pipeline for cards without shader support (visuals were not good), or the dreaded “ARB Assembly Shaders”. Neither of them is supported by WebGL.

So, for the purpose of porting the engine, I did the backend update in four steps:

But that’s not all! Under all of this, there was another hidden GL emulation layer.

My renderer path used OpenGL ES 2.0, but what browsers really want is WebGL. There are subtle but important differences between the two, most notably the fact that no rendering can be done from client-side memory in WebGL. Everything has to be done using Vertex Buffer Objects (VBOs), including triangle indices, which need to be in separate VBOs from the vertices. While idTech 4 did have support for this, it was only partial. Many parts of the game were still rendering without VBOs (interactive GUIs notably), and reusing the same VBOs for either vertices or indices without worrying.

So, I first had to enable the Emscripten OpenGL ES 2.0 emulation layer to properly handle the OpenGL ES 2.0 code that would not have worked on WebGL. It means that at some point in time there was in fact a pile of layers for OpenGL: the “Regal GL 1.x emulation layer” working on top of the “Emscripten OpenGL ES 2.0 emulation layer” working on top of the browser’s WebGL implementation (probably working itself on top of something else…).

… And finally, that worked! From Legacy GL 1.X / ARB Assembly Shaders to WebGL renderer backend, there is a very long and tricky story. No more piles of layers, now with nice “bumpy” graphics, and a 2x performance boost on the renderer side.

By doing the transition that way (piles of GL emulation layers, progressively stripped down), my goal was to never stay for too long with a non functional renderer. This is because “Coding in the Dark” (= having broken code base for too long) is a very stressful thing, as you can’t know if your time investment will eventually succeed or not. By always having something working - even at the cost of temporary code mess and time loss - I was able to mitigate the risk of failure and to stay more confident in the project outcome.

Game data streaming

This is an important topic, and a mandatory requirement for successful WebAssembly gaming. It is not quite OK yet in the current port, although it has been somewhat improved. I’ll talk about this later.

Networking

Networking is a completely unaddressed topic. The server code has been removed, and the async client code is still there, but disabled. I skipped this because Multiplayer is not available in the Demo version of the game.

Converting sync code to async code

The web has something really painful for us native C++ programmers. It can be summarized with the following drastic rule:

“Thou shalt yield to your Grandmaster the Browser. Nuff’ said.”

Repeat this to yourself 10 times a day.

If you don’t do that, things will start to hang, the browser will start to complain, audio will stop playing, the canvas and graphics will not be updated, things will not be downloaded, users will get angry, poor kittens will get beaten, Hell will invade Earth with hordes of demons, and it’s literally the end of the world!

O_o

Thankfully, Emscripten provides some helpful ways to handle this from our Native/C++ view of the world, which is more like “we are in control and the OS does its best to be forgotten” (compare and contrast this with the previous drastic rule of the Web).

The first and most important is a functionality that properly handles the main loop of your application so that it yields to the browser at each loop iteration. Only a rewrite of the main loop is needed, and 75% of the issues are solved.

However, sometimes there are things in the main loop that can occasionally take time, such as loading a level or fetching data from the network. And here the pain begins, because if you don’t do anything, everything will start to hang and even your shiny “loading screen” will not be shown to the user.
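A minimal sketch of such a main loop rewrite is shown here. It uses the Emscripten functions `emscripten_set_main_loop_arg` and `emscripten_cancel_main_loop`; whether the port uses exactly these calls is an assumption, and the `Game` struct is a hypothetical stand-in for the real engine state. The same code also builds natively, where a classic blocking loop is used.

```cpp
#include <cassert>
#ifdef __EMSCRIPTEN__
#include <emscripten.h>
#endif

// Hypothetical per-frame state; the real engine has far more.
struct Game {
    int framesRun = 0;
    int framesWanted = 3;
    bool Done() const { return framesRun >= framesWanted; }
    void Frame() { ++framesRun; }
};

#ifdef __EMSCRIPTEN__
// Browser build: the browser calls this once per frame, and returning
// from it is what yields control back to the browser.
static void BrowserFrame(void* arg) {
    Game* game = static_cast<Game*>(arg);
    if (game->Done()) {
        emscripten_cancel_main_loop();
        return;
    }
    game->Frame();
}
#endif

void RunMainLoop(Game& game) {
    assert(game.framesWanted >= 0);
#ifdef __EMSCRIPTEN__
    // fps = 0 lets the browser pace the loop (requestAnimationFrame).
    // simulate_infinite_loop = 0 makes this call return immediately,
    // so `game` must outlive this function.
    emscripten_set_main_loop_arg(BrowserFrame, &game, 0, 0);
#else
    // Native build: blocking in a loop is fine here.
    while (!game.Done()) game.Frame();
#endif
}
```

The key design point is that the loop body becomes a callback that does one iteration and returns, instead of a `while` loop that never gives control back.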

EDIT Jul. 2019: the following paragraph is deprecated now, as I use ASYNCIFY instead of EMTERPRETER.

There, you have a choice: either you rewrite your app to split these long tasks into short iterations that can yield on a regular basis (read: maybe not possible), or you use a really interesting functionality provided by Emscripten: the Emterpreter. I won’t go into the details now (and sadly the doc is not very clear about the purpose of that functionality), but when I finally understood the point, it really blew my mind technically.

Just remember this: if you need your native C++ code to arbitrarily yield to the browser - and resume later at the yielding point - take a serious look at the Emterpreter. While it may not sound clear for now, you’ll definitely understand the need for this once you are facing what I now call the “yielding problem”.

For sure, 99% of C++ programmers writing non-trivial programs for the Web will be confronted with this issue.

The effect on the D3wasm port is that now, when you load a level, there is a loading screen and the progress bar is being updated. Sounds dumb? Of course it is! But getting this simple thing to work was a lot more complex than I expected. Seriously.
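For comparison, the first option mentioned above (splitting a long task into short iterations that yield regularly) can be sketched as follows. This is not what the port does, since it relies on the Emterpreter and later ASYNCIFY; `IncrementalLoader` and its methods are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>

// Hypothetical level loader that does a bounded amount of work per call,
// so that the main loop can return to the browser between calls and the
// loading screen keeps being redrawn.
class IncrementalLoader {
public:
    explicit IncrementalLoader(std::size_t totalItems) : total_(totalItems) {}

    // Loads at most `budget` items. Returns true while work remains.
    bool Step(std::size_t budget) {
        assert(budget > 0);  // a zero budget would never finish
        const std::size_t remaining = total_ - loaded_;
        loaded_ += std::min(budget, remaining);
        return loaded_ < total_;
    }

    // Value for the progress bar, in percent.
    int ProgressPercent() const {
        return total_ == 0 ? 100 : static_cast<int>(loaded_ * 100 / total_);
    }

private:
    std::size_t total_;
    std::size_t loaded_ = 0;
};
```

Each frame would call `Step` once and then draw the progress bar from `ProgressPercent`. The catch is that existing loading code has to be restructured around such a state object, which is why this route is often impractical for a large existing engine.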

Amount of work

It took me roughly 7 weeks almost full time to complete the port.

A couple of days to get it to compile, 1.5 weeks to get it running (removing multi-threading, a lot of tweaking, debugging, and finally hearing sound for the first time… with a black screen obviously), 2 weeks to get the fixed function pipeline version working (including a lot of Regal GL library debugging), 1 week to fix the issues of “sync vs async world” and to enhance the data loading/streaming, and 2 weeks up to the actual WebGL/GLSL renderer.

And a couple of days to write this TL;DR article. Sorry for that :)

Half of that time was spent on just understanding things and figuring out how to address the problems. The other half on coding.

Honestly, this proved to be an exceptionally hard task. From day one until a couple of days before the first version, I was not sure I would eventually manage to get something working. On average I gave up at least once a week. But short walks are one of the developer’s best friends (along with good coffee), so I finally saw it through to the end.

This was also a very interesting and rewarding experience, having learned quite a lot of things.

Trivia

Closing Thoughts

WebAssembly is a really interesting technology, and something to seriously consider in the near future.

However, it is also quite a demanding one for developers. You have to know native application programming very well, with its “blocking and multi-threaded world”, and the Web platform very well too, with its “async and single-threaded world”.

The major issue is that both worlds are usually in contradiction with each other, and that makes things difficult. In the end there are a lot of “things to know well”, and a huge L3 cache is needed in the brain to handle all of this at once. When I see that people are trying hard to involve Rust in that ecosystem, I think that would probably be too much to grasp for the average developer and project.

On the other hand, being able to use programming languages other than Javascript in the client-side Web ecosystem might be a good thing. Developers have been waiting a long time for something really designed for the Web. DartWasm anyone?

Being able to play a video game by just visiting a web page (no installation, etc.) is an interesting technological breakthrough. There is more and more evidence, such as this project, suggesting that the technology is almost ready to support this.

By extension, this is not only true for Games, but for Apps too.

In the future, I would not be too surprised to see major software distribution platforms experimenting with “Walking on the Wasm side”.

Legal and Business implications of technological (re-)evolutions are an interesting topic too. Technology is fast-moving and sometimes disruptive, so much so that even those aspects can be challenged.

Big companies and IP holders tend to have a very conservative/defensive stance towards technological evolutions that may affect how their IP is distributed and generates revenue. For example, the music/video streaming services as we know them today have seen years of legal battles between IP holders and distribution platforms.

In the case of Apps, I would not be surprised either to see future “game/application streaming services” involved in similar battles. The usual cycle of “Buy / Download / Install / Run / Uninstall” would be replaced by just a Start button and a monthly fee to access a full library of games or apps.

Would a developer/publisher agree to this? How would it be monetized? By number of “starts”? By effective in-app time? These are a few interesting and unanswered questions.

Author and Contact

The author of this project is me, Gabriel Cuvillier, on behalf of my R&D Lab Continuation-Labs.

This is an experimental research project, for the purpose of learning WebAssembly, demonstrating what can be done with that technology, and making the Web move forward.

You can reach me at gabriel.cuvillier@continuation-labs.com

Disclaimer

The D3wasm project, this website, the Doom 3 Demo online demonstration, me, and Continuation-Labs are in no way associated with or supported by id Software, Bethesda Softworks or ZeniMax Media Inc.

DOOM, id, id Software, id Tech and related logos are registered trademarks or trademarks of id Software LLC in the U.S. and/or other countries. All Rights Reserved.

WebAssembly is a standard being developed by the W3C.

The D3wasm source code is a derivative work of the General Public License release of Doom 3.

The cheesy D3wasm logo is a derivative work of the WebAssembly logo, covered by CC0 license. Ok, I could have done it better…